Introduction to Content Switching - Application & Virtual Server Load Balancing via Deep Packet Inspection

Content switches (also sometimes called application switches) are a class of network device that is becoming increasingly common in medium-sized and large data centres and web-facing infrastructures.

The devices we traditionally call switches work at Layer 2 of the OSI model and simply direct incoming frames to the appropriate exit port based on their destination MAC address. Content switches, however, also inspect the contents of the data packet all the way from Layer 4 right up to Layer 7 and can be configured to do all sorts of clever things depending on what they find.

An increasing number of vendors are offering these products. Cisco’s CSM (Content Switching Module) and ACE (Application Control Engine) module will slot into its 6500 Series switches and 7600 Series routers and Cisco provides standalone appliances such as the ACE 4710. F5 Networks is another major contender with its BigIP LTM (Local Traffic Manager) and GTM (Global Traffic Manager) range of appliances.

In functional terms what you get here is a dedicated computer running a real OS (the BigIP LTMs run a variant of Linux) with added hardware to handle the packet manipulation and switching aspects. The content switching application, running on top of the OS and interacting with the hardware, provides both in-depth control and powerful traffic processing facilities.

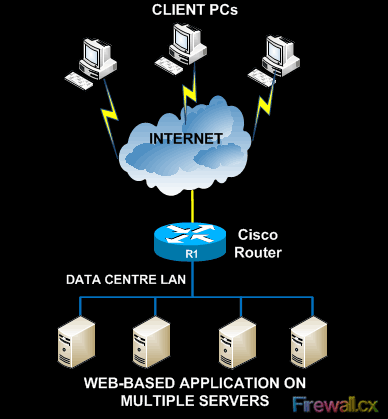

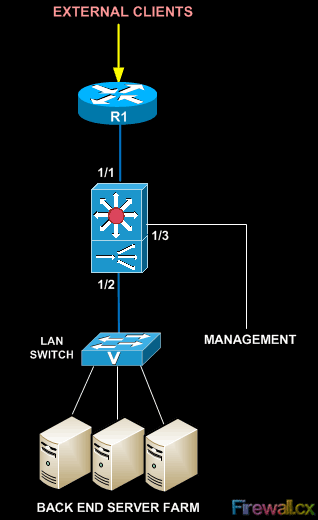

So what can these devices do? We’ll consider that by looking at an example. Suppose you have a number of end-user PCs out on the internet that need to access an application running on a server farm in a data centre:

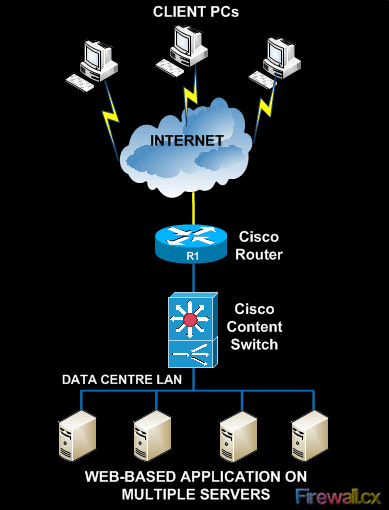

Obviously you have a few things to do here. Firstly, you need to provide a single ‘target’ IP address for those users to aim at, as opposed to publishing the addresses of all the individual servers. Secondly, you need some method of routing all those incoming sessions through to your server farm and sharing them evenly across your servers while still providing isolation between your internal network and the outside world. And, thirdly, you need it to be resilient so it doesn’t fall over the moment one of your servers goes off-line or something changes. A content switch can do all of this for you and much more.

Let’s look at each of these aspects in more detail.

Virtual Servers

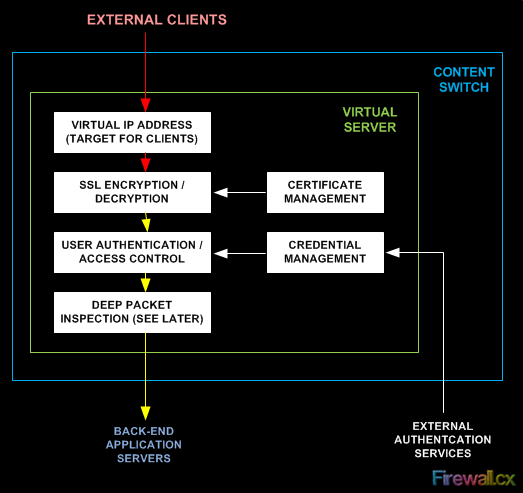

This is one of the most basic things you can do with these devices. On your content switch you can define a virtual server which the switch will then ‘offer’ to the outside world. This is more, though, than just a virtual IP address - you can specify the ports served, the protocols accepted, the source(s) allowed and a whole heap of other parameters. And because it’s your content switch the users are accessing now, you can take all this overhead away from your back-end servers and leave them to do what they do best - serve up data.

For example, if this is a secure connection, why make each server labour with the complexities of certificate management and SSL session decryption? Let the content switch handle the SSL termination and manage the client and server certificates for you. This reduces the server load, the application complexity and your administration overhead. Do you need users to authenticate to gain access to the application? Again, do it at the virtual server within the content switch and everything becomes much easier. Timeouts, session limits and all sorts of other things can be defined and controlled at this level too.
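To make the idea of a virtual server a little more concrete, here is a minimal Python sketch of the kind of admission check described above. It is only an illustration: the `VirtualServer` class, its field names and the example addresses are all assumptions made for this sketch, not any vendor's configuration schema.

```python
from dataclasses import dataclass, field
from ipaddress import ip_address, ip_network


@dataclass
class VirtualServer:
    """Hypothetical model of a virtual server definition (not a vendor schema)."""
    vip: str                 # the single 'target' address published to users
    ports: set               # ports served
    protocols: set           # protocols accepted, e.g. {"tcp"}
    allowed_sources: list = field(default_factory=lambda: ["0.0.0.0/0"])
    max_sessions: int = 1000  # session limit enforced at the virtual server
    active_sessions: int = 0

    def admits(self, src_ip, dst_ip, dst_port, protocol):
        """Return True if a new connection matches this virtual server's rules."""
        if dst_ip != self.vip or dst_port not in self.ports:
            return False
        if protocol not in self.protocols:
            return False
        if self.active_sessions >= self.max_sessions:
            return False
        source = ip_address(src_ip)
        return any(source in ip_network(net) for net in self.allowed_sources)


# Example addresses come from the documentation ranges and are purely illustrative.
vs = VirtualServer(vip="203.0.113.10", ports={443}, protocols={"tcp"},
                   allowed_sources=["198.51.100.0/24"])
print(vs.admits("198.51.100.7", "203.0.113.10", 443, "tcp"))  # allowed source and port
print(vs.admits("192.0.2.99", "203.0.113.10", 443, "tcp"))    # source not in the allowed list
```

The point of the sketch is that every one of these checks happens on the content switch, so the back-end servers never see traffic that fails them.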

Load Balancing

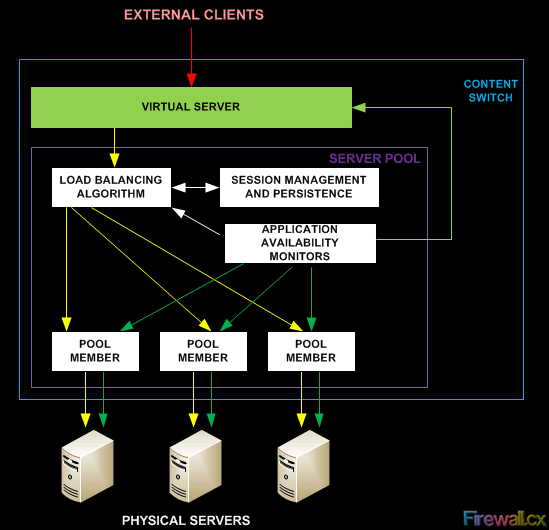

Once our users have successfully accessed the virtual server, what then? Typically you would define a resource pool (of servers) on your content switch and then define the members (individual servers) within it. Here you can address issues such as how you want the pool to share the work across the member servers (round robin or quietest first), and what should happen if things go wrong.
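The two sharing methods mentioned above can be sketched in a few lines of Python. This is a simplified illustration rather than how any particular device implements them; the class names and server names are made up, and ‘quietest first’ is interpreted here as ‘fewest active connections’.

```python
from itertools import cycle


class RoundRobinPool:
    """Hands out pool members in strict rotation."""

    def __init__(self, members):
        self._rotation = cycle(list(members))

    def pick(self):
        return next(self._rotation)


class QuietestFirstPool:
    """Hands out the member with the fewest active connections."""

    def __init__(self, members):
        self.connections = {member: 0 for member in members}

    def pick(self):
        # min() returns the first member with the lowest count when there is a tie.
        member = min(self.connections, key=self.connections.get)
        self.connections[member] += 1
        return member

    def release(self, member):
        # Called when a session on this member ends.
        self.connections[member] -= 1


rr = RoundRobinPool(["server1", "server2", "server3"])
print([rr.pick() for _ in range(4)])

qf = QuietestFirstPool(["server1", "server2", "server3"])
first_three = [qf.pick() for _ in range(3)]
qf.release("server2")   # server2 becomes the quietest member
print(qf.pick())
```

Round robin ignores how busy each server is, which is fine when sessions are short and similar; quietest first copes better when some sessions last much longer than others.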

This second point is important – you might need two servers as a minimum sharing the load to provide a good enough service to your users, but what happens if there’s only one? And what happens if a server goes down while your service is running? You can take care of all this inside the content switch within the configuration of your pool. For example, you could say that if there are fewer than two servers up then the device should stop offering the virtual service to new clients until the situation improves. And you can set up monitors (Cisco calls them probes) so that the switch will check for application availability (not just simple pings) across its pool members and adjust itself accordingly. And all this will happen automatically while you sit back and sip your coffee.
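As a rough sketch of the ‘minimum members’ rule and the monitors described above, the following Python models a pool that re-checks its members and refuses new clients when too few are healthy. The `MonitoredPool` class and the `probe` callable are assumptions for illustration; on a real device the probe would be an application-level check such as fetching a known page, which is faked here with a dictionary.

```python
class MonitoredPool:
    """Hypothetical pool that tracks member health via a pluggable probe."""

    def __init__(self, members, probe, min_up=2):
        self.members = list(members)
        self.probe = probe      # callable(member) -> bool, assumed to test the application
        self.min_up = min_up    # minimum healthy members needed to offer the service
        self.up = set()

    def run_monitors(self):
        """Probe every member and remember which ones answered correctly."""
        self.up = {member for member in self.members if self.probe(member)}

    def accepts_new_clients(self):
        """Offer the virtual service only while enough members are healthy."""
        return len(self.up) >= self.min_up


# Fake application status standing in for real probes.
health = {"server1": True, "server2": True, "server3": True}
pool = MonitoredPool(health, probe=lambda member: health[member], min_up=2)

pool.run_monitors()
print(pool.accepts_new_clients())   # three members up

health["server2"] = False
health["server3"] = False
pool.run_monitors()
print(pool.accepts_new_clients())   # only one member up
```

In practice the monitors run on a timer, so the pool notices both failures and recoveries without anyone touching the configuration.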

You also need to consider established sessions. If a user opens a new session and their initial request is handled by server 1, you need to make sure that all subsequent communications from that user also go to server 1 as opposed to servers 2 or 3 which have no record of the data the user has already entered. This is called persistence, and the content switch can handle that for you as well.
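Persistence boils down to remembering which server a client was given the first time. Here is a minimal sketch, assuming the client is identified by some key such as a source address or session cookie; the class and names are illustrative only, and real devices also age entries out of the table.

```python
class PersistentBalancer:
    """Round-robin balancer that pins each client to its first server."""

    def __init__(self, members):
        self.members = list(members)
        self.table = {}     # client key -> member chosen on first contact
        self._next = 0      # index of the next member to hand out

    def route(self, client_key):
        if client_key not in self.table:
            # New client: pick the next member in rotation and remember it.
            self.table[client_key] = self.members[self._next % len(self.members)]
            self._next += 1
        return self.table[client_key]


lb = PersistentBalancer(["server1", "server2", "server3"])
print(lb.route("alice"))   # first contact, assigned a server
print(lb.route("bob"))     # a different client gets the next server
print(lb.route("alice"))   # returning client goes back to the same server
```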

Deep Packet Inspection

Content switches do all the above because they inspect the contents of incoming packets right up to Layer 7. They know the protocol in use, for example, and can pull the username and password out of the data entered by the user and use those to grant or deny access. This ability unleashes the ultimate power of the device – you can inspect the whole of the packet including the data and basically have your switch do anything you want.

Perhaps you feel malicious and want to deny all users named “Fred”. It’s a silly example, but you could do it. Maybe you’d like the switch to look in the packet’s data and change every instance of “Fred” to “idiot” as the data passes through. Again, you could do it. The value of this becomes clearer when you think of global enterprises (Microsoft Update is a prime example) where they want to know, perhaps, what OS you’re running or which continent you’re on so you can be silently rerouted to the server farm most appropriate for your needs. Your content switch can literally inspect and modify the incoming data on the fly to facilitate intelligent traffic-based routing, seamless redirects or disaster recovery scenarios that would be hard to achieve by conventional means. Want to inspect the HTTP header fields and use, say, the browser version to decide which back-end server farm the user should hit? Want to check for a cookie on the user’s machine and make a routing decision based on that? Want to create a cookie if there isn’t one? You can do all of this and more.
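The header and cookie examples above can be sketched as follows. This is a simplified illustration using only the Python standard library: the farm names, the `farm` cookie and the ‘Mobile’ test on the User-Agent header are all invented for the example, and a real content switch would do this in its own rule language.

```python
from http.cookies import SimpleCookie


def parse_headers(raw_request: bytes) -> dict:
    """Extract HTTP header fields from a raw request into a lower-cased dict."""
    head = raw_request.split(b"\r\n\r\n", 1)[0].decode("iso-8859-1")
    headers = {}
    for line in head.split("\r\n")[1:]:      # skip the request line
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()
    return headers


def choose_farm(raw_request: bytes):
    """Return (farm, set_cookie) where set_cookie is None if a cookie already exists."""
    headers = parse_headers(raw_request)
    cookies = SimpleCookie(headers.get("cookie", ""))
    if "farm" in cookies:
        # Returning user: honour the routing decision stored in the cookie.
        return cookies["farm"].value, None
    # New user: decide from the browser's User-Agent and create a cookie.
    farm = "mobile-farm" if "Mobile" in headers.get("user-agent", "") else "default-farm"
    return farm, f"farm={farm}; Path=/"


new_user = (b"GET / HTTP/1.1\r\nHost: example.com\r\n"
            b"User-Agent: Mozilla/5.0 (Mobile)\r\n\r\n")
returning_user = (b"GET / HTTP/1.1\r\nHost: example.com\r\n"
                  b"Cookie: farm=eu-farm\r\n\r\n")

print(choose_farm(new_user))
print(choose_farm(returning_user))
```

Note that this only works because the switch reads the application data itself; a Layer 2 or Layer 3 device never looks that far into the packet.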

With the higher-end devices this really is limited only by your creativity. For example, the F5 and Cisco devices offer a whole programming language in which you can implement whatever custom processing you need. Once written, you simply apply these scripts (called iRules on the F5) at the appropriate points in the virtual server topology and off they go.

Scalability

What happens if you suddenly double your user base overnight and now need six back-end servers to handle the load instead of three? No problem – just add three more servers into the server pool on your content switch, plug them into your back-end network and they’re ready to go. And remember all you need here are simple application servers because all the clever stuff is being handled for them by the content switch. With an architecture like this server power becomes a commodity you can add or remove at will, and it’s also very easy to make application-level changes across all your servers because it’s all defined in the content switch.

Topologies

How might you see a content switch physically deployed? Well, these are switches so you might well see one in the traditional ‘straight through’ arrangement:

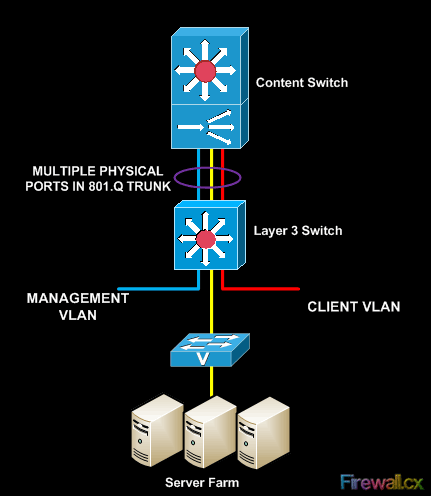

Or since they are VLAN aware you might also see a ‘content-switch-on-a-stick’:

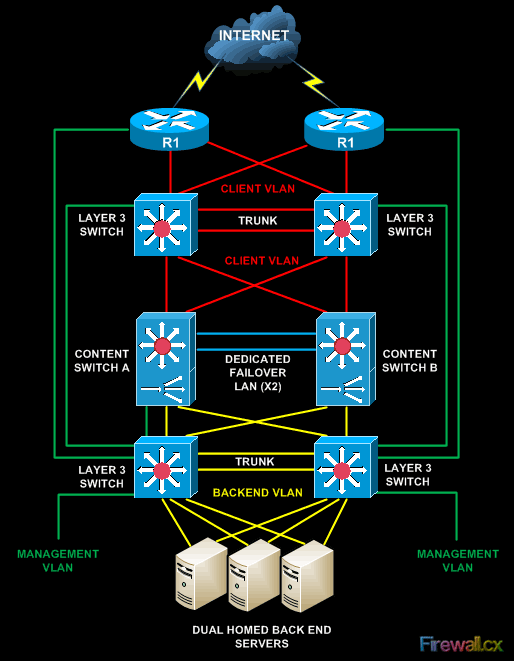

You’ll also often find them in resilient pairs or clusters offering various failover options to ensure high availability. And it’s worth pointing out here that failover means just that – the session data and persistence information are constantly passed across to the standby so that if failover occurs even the in-flight sessions can be taken up and the end users won’t even notice.

And, finally, you can now even get virtual content switches that you can integrate with other virtual modules to provide a complete application service-set within a single high-end switch or router chassis. Data centre in a box, anyone?

Summary

Content switches go far beyond the connectivity and packet-routing services offered by traditional Layer 2 and Layer 3 switches. By inspecting the whole packet right up to Layer 7, including the end-user data, they can:

- Intelligently load balance traffic across multiple servers based on the availability of the content and the load on the servers.

- Monitor the health of each server and provide automatic failover by re-routing user sessions to the remaining devices in the server farm.

- Provide intelligent traffic management capabilities and differentiated services.

- Handle SSL termination and certificate management, user access control, quality-of-service and bandwidth management.

- Provide increased application resilience and improve scalability and flexibility.

- Allow content to be flexibly located and support virtual hosting.

Further information

Links to Cisco webpages

http://www.cisco.com/en/US/prod/collateral/contnetw/ps5719/ps7027/Data_Sheet_Cisco_ACE_4710.html

http://www.cisco.com/en/US/products/ps6906/index.html

http://www.cisco.com/en/US/prod/collateral/modules/ps2706/ps6906/prod_brochure0900aecd804585e5.pdf

Links to F5 webpages

http://www.f5.com/products/big-ip/big-ip-local-traffic-manager/overview/

http://www.f5.com/pdf/products/big-ip-platforms-datasheet.pdf
