Key Features of a True Cloud-Native SASE Service. Setting the Right Expectations

Secure Access Service Edge (SASE) is an architecture widely regarded as the future of enterprise networking and security. In previous articles we talked about the benefits of a converged, cloud-delivered, SASE service which can deliver necessary networking and security services to all enterprise edges. But what does "cloud delivered" mean exactly? And are all cloud services the same?


We’ll answer these questions, and more, in this article.

Related Articles:

- Complete Guide to SD-WAN. Technology Benefits, SD-WAN Security, Management, Mobility, VPNs, Architecture and more

- How To Secure Your SD-WAN. Comparing DIY, Managed SD-WAN and SD-WAN Cloud Services

- SASE and VPNs: Reconsidering your Mobile Remote Access and Site-to-Site VPN strategy

- Converged SASE Backbone – How Leading SASE Provider, Cato Networks, Reduced Jitter/Latency and Packet Loss by a Factor of 13!

Defining Cloud-Native Services

While we all use cloud services daily, for both work and personal purposes, we typically don't give much thought to what actually goes on in the elusive place we fondly call "the cloud". For most people, "the cloud" simply means using someone else’s computer. For most cloud services, this definition is good enough, as we don't need to know, or care, what they do behind the scenes.

For cloud services delivering enterprise networking and security services, however, this matters a lot. The difference between a true cloud-native architecture and software simply deployed in a cloud environment can have a major impact on the availability, stability, performance, and security of your enterprise.

Let's take a look at what cloud-native means, and the role it plays in our network.

Cloud-Native – Single Pass Architecture

At the core of a cloud-native architecture lies the basic component which processes the service traffic and applies the service functionality and logic. A cloud service that needs to apply multiple processing engines, which is the case with SASE, will benefit from optimizing the way the traffic is managed and processed.

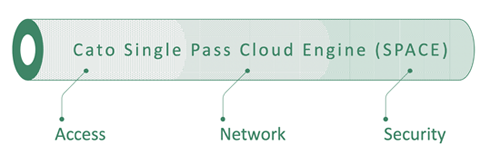

A true cloud-native service will converge all the required functionality into a single, atomic processing unit. This means that encrypted traffic is decrypted once for all engines, and that the different engines run in parallel and share a single context, which streamlines the processing flow. Because traffic is not decrypted and re-inspected separately by each engine, this dramatically reduces processing latency and enhances networking and security capabilities.
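To make the single-pass idea concrete, here is a toy sketch that contrasts it with a chain of separate appliances. It is not Cato's SPACE implementation: the XOR "cipher" stands in for TLS decryption, and the `firewall`, `ips` and `dlp` engines are hypothetical one-line checks. The point it shows is structural: the single-pass path decrypts once and runs every engine in parallel over one shared context, while the chained path decrypts again for every engine.

```python
from __future__ import annotations

from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field
from typing import Callable

KEY = 0x5A  # hypothetical stand-in for real TLS session keys


def xor_cipher(data: bytes) -> bytes:
    """Toy symmetric 'cipher' (its own inverse), used only to model decryption."""
    return bytes(b ^ KEY for b in data)


@dataclass
class FlowContext:
    """Per-flow state shared by every engine."""
    plaintext: bytes
    verdicts: dict[str, str] = field(default_factory=dict)


Engine = Callable[[FlowContext], str]


# Hypothetical inspection engines; each only reads the shared plaintext.
def firewall(ctx: FlowContext) -> str:
    return "allow" if ctx.plaintext.startswith(b"GET") else "block"


def ips(ctx: FlowContext) -> str:
    return "block" if b"exploit" in ctx.plaintext else "allow"


def dlp(ctx: FlowContext) -> str:
    return "block" if b"ssn=" in ctx.plaintext else "allow"


ENGINES: dict[str, Engine] = {"firewall": firewall, "ips": ips, "dlp": dlp}


def single_pass(payload: bytes, engines: dict[str, Engine]) -> tuple[FlowContext, int]:
    """Decrypt once, then run all engines in parallel over one shared context."""
    ctx = FlowContext(plaintext=xor_cipher(payload))
    decryptions = 1
    with ThreadPoolExecutor() as pool:
        # Engines only read ctx.plaintext, so sharing it across threads is safe;
        # verdicts are gathered on the calling thread.
        futures = {name: pool.submit(engine, ctx) for name, engine in engines.items()}
        ctx.verdicts = {name: fut.result() for name, fut in futures.items()}
    return ctx, decryptions


def multi_pass(payload: bytes, engines: dict[str, Engine]) -> tuple[dict[str, str], int]:
    """Chained appliances: every engine decrypts and builds its own context."""
    verdicts: dict[str, str] = {}
    decryptions = 0
    for name, engine in engines.items():
        ctx = FlowContext(plaintext=xor_cipher(payload))  # re-decrypt per engine
        decryptions += 1
        verdicts[name] = engine(ctx)
    return verdicts, decryptions
```

Both paths reach the same verdicts; the difference is that the chained path's decryption (and parsing) cost grows with the number of engines, while the single-pass path pays it once.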

A production example of single-pass processing is the Cato Single Pass Cloud Engine (SPACE), which applies all SASE services in one unified processing unit.

Scalable Cloud-Native Services

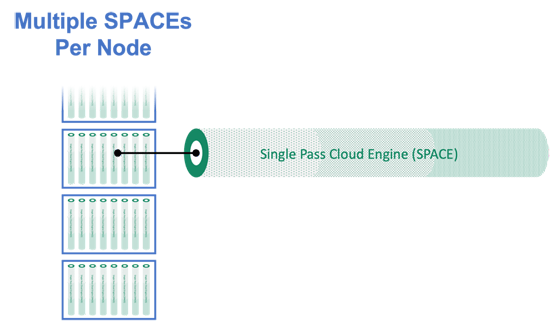

A cloud-native service needs to be agile and respond to changing conditions. If traffic levels rise, for example, the service needs to scale accordingly. A true cloud-native service will have the ability to continuously gauge service performance metrics and spin up additional processing units as needed.

Cato SASE Cloud PoPs (Points of Presence) consist of multiple processing nodes, each running multiple SPACEs. Additional nodes can be spun up as needed.
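The scaling decision described above can be sketched as a simple sizing rule: measure demand, divide by the usable capacity of one node, and spin up (or drain) the difference. This is a minimal sketch under stated assumptions, not how any particular provider sizes its PoPs: the throughput per SPACE (`gbps_per_space`), the target utilization that leaves headroom for bursts, and the minimum node count kept for redundancy are all hypothetical parameters.

```python
import math


def desired_nodes(demand_gbps: float, gbps_per_space: float, spaces_per_node: int,
                  target_utilization: float = 0.7, min_nodes: int = 2) -> int:
    """How many processing nodes a PoP should run for the measured demand.

    All capacity figures are hypothetical inputs, not vendor specifications.
    """
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    # Usable capacity of one node, keeping (1 - target_utilization) as headroom.
    node_capacity = gbps_per_space * spaces_per_node * target_utilization
    # Never drop below min_nodes, so a single node failure is survivable.
    return max(min_nodes, math.ceil(demand_gbps / node_capacity))


def scale_step(current_nodes: int, demand_gbps: float, **sizing) -> int:
    """Positive: nodes to spin up. Negative: nodes that could be drained."""
    return desired_nodes(demand_gbps, **sizing) - current_nodes
```

For example, with 30 Gbps of demand, a hypothetical 1 Gbps per SPACE and 8 SPACEs per node, each node offers 5.6 Gbps of usable capacity, so the PoP needs 6 nodes; if it is running 4, `scale_step` asks for 2 more.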

Cloud-Native Service Resiliency

Beyond scalability, this architecture enables the service to recover quickly from failures. If any single SPACE fails, the service seamlessly reroutes its traffic to other SPACEs. If an entire node fails, the service routes traffic to other nodes:

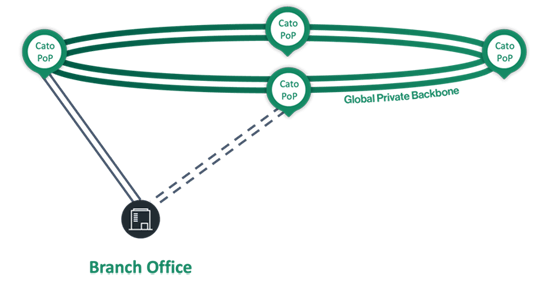
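One common way to implement this kind of rerouting is rendezvous (highest-random-weight) hashing, sketched below. It is an illustrative technique chosen for this example, not a description of Cato's internals, and the `Space`, `pick_space` and `fail_node` names are hypothetical. Each flow is assigned to the healthy SPACE with the highest hash score, so when a SPACE or a whole node fails, only the flows that were on the failed units move; every other flow stays where it was.

```python
from __future__ import annotations

import zlib
from dataclasses import dataclass


@dataclass(eq=False)
class Space:
    """One hypothetical processing unit inside a PoP node."""
    node: str
    name: str
    healthy: bool = True


def build_pop(nodes: list[str], spaces_per_node: int) -> list[Space]:
    return [Space(node, f"space-{i}") for node in nodes for i in range(spaces_per_node)]


def score(flow_id: str, space: Space) -> int:
    # Deterministic pseudo-random weight for a (flow, SPACE) pair.
    return zlib.crc32(f"{flow_id}|{space.node}/{space.name}".encode())


def pick_space(flow_id: str, spaces: list[Space]) -> Space:
    """Rendezvous hashing: a flow moves only if the SPACE it was on goes unhealthy."""
    healthy = [s for s in spaces if s.healthy]
    if not healthy:
        raise RuntimeError("no healthy SPACE left in this PoP; fail over to another PoP")
    return max(healthy, key=lambda s: score(flow_id, s))


def fail_node(spaces: list[Space], node: str) -> None:
    """A node failure takes down every SPACE running on it."""
    for s in spaces:
        if s.node == node:
            s.healthy = False
```

The design choice matters for "seamless" failover: a naive `hash % len(healthy)` scheme would reshuffle almost every flow whenever one unit disappears, whereas rendezvous hashing limits the disruption to the flows that actually lost their unit.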

Beyond any failures within a PoP, it’s also critical to provide resiliency in case an entire PoP fails. A true cloud-native service will detect such a failure and immediately and seamlessly route all traffic to an alternative PoP. A SASE service with a large number of globally distributed PoPs will enable service continuity with minimal impact on latency and responsiveness.

Even if a PoP itself doesn’t fail, it may still become unreachable due to a network link failure. An architecture in which each PoP is connected by dual links from different providers helps ensure all PoPs are always accessible.
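The PoP-level behavior from the last two paragraphs can be expressed as a small selection rule: a PoP is usable only if it is healthy and at least one of its upstream links is up, and traffic goes to the lowest-latency usable PoP. This is a minimal sketch assuming latency is the only selection criterion; the PoP names, RTT values and provider labels are hypothetical, and a real service would also weigh factors such as load.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Link:
    provider: str
    up: bool = True


@dataclass
class PoP:
    name: str
    rtt_ms: float  # measured latency from the site to this PoP
    healthy: bool = True
    links: list[Link] = field(default_factory=list)

    def reachable(self) -> bool:
        # Usable only if the PoP is up AND at least one upstream link works.
        return self.healthy and any(link.up for link in self.links)


def select_pop(pops: list[PoP]) -> PoP:
    """Send traffic to the lowest-latency PoP that is currently reachable."""
    candidates = [p for p in pops if p.reachable()]
    if not candidates:
        raise RuntimeError("no reachable PoP")
    return min(candidates, key=lambda p: p.rtt_ms)


def dual_homed(name: str, rtt_ms: float) -> PoP:
    # Two links from different (hypothetical) providers, as described above.
    return PoP(name, rtt_ms, links=[Link("provider-1"), Link("provider-2")])
```

With dual-homed PoPs, losing one provider's link leaves the nearest PoP in service; only when both links fail, or the PoP itself fails, does traffic shift to the next-closest PoP.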

Cloud-Native Managed Service

Scalability and resiliency were not invented in the cloud. They can be achieved using point solutions deployed in on-premises locations as well. This, however, requires a lot of planning, continuous monitoring and maintenance, and taking calculated risks which can be, well, risky.

The required planning needs to consider current traffic and processing capacity. Both can change over time: traffic requirements tend to grow, and new functionality, such as security modules, may be added, requiring additional processing power.

Purchasing an appliance that will not meet future requirements will eventually force an upgrade, typically a forklift upgrade, which is expensive, risky and time-consuming. High availability (HA) also has to be planned and set up at every location.
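The planning problem above can be put into numbers with a back-of-the-envelope calculation: how many months until an appliance's traffic crosses a safe utilization threshold? The sketch assumes steady compound monthly growth and models a newly enabled feature (for example, a security module) as a fixed fraction of capacity it consumes; the traffic, capacity, growth and overhead figures are hypothetical, not measurements.

```python
from __future__ import annotations

import math


def months_until_upgrade(current_mbps: float, capacity_mbps: float,
                         monthly_growth: float, headroom: float = 0.8,
                         feature_overhead: float = 0.0) -> int | None:
    """Months until traffic exceeds the usable share of an appliance's capacity.

    Assumes compound growth: traffic(t) = current * (1 + monthly_growth) ** t.
    feature_overhead is the (hypothetical) fraction of capacity consumed by
    newly enabled features such as extra security modules.
    """
    limit = capacity_mbps * headroom * (1 - feature_overhead)
    if current_mbps >= limit:
        return 0  # already over the threshold: upgrade now
    if monthly_growth <= 0:
        return None  # never reaches the limit under these assumptions
    # Smallest whole t with current * (1 + g) ** t >= limit.
    return math.ceil(math.log(limit / current_mbps) / math.log1p(monthly_growth))
```

For instance, a hypothetical 1,000 Mbps appliance carrying 400 Mbps, with 3% monthly growth and 80% headroom, reaches its limit in about 24 months; enabling a feature that uses a quarter of its capacity shortens that to about 14 months, which is exactly the kind of shift that triggers an unplanned forklift upgrade.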

To make sure no site runs out of resources, all sites and appliances need to be monitored constantly. Every time an appliance vendor releases a new software version or security update, a maintenance window must be scheduled and the update propagated throughout the network. There’s also a need to make sure these updates do not negatively affect the appliance and service.

Summary

A true cloud-native service makes all the above work, risk and worries go away. A cloud-native service provider should manage all the above aspects and ensure all required resources are always available. It scales on-demand and adapts to traffic growth and newly added functionality. High availability is applied at all locations and for all links, ensuring the service is always up and performing optimally. All software upgrades and security updates are transparently applied and tested to make sure the service isn’t impacted.

The real value of a true cloud-native service is that it lets enterprises focus on their business, the what, while eliminating the overhead and risk of managing the how.
